competitiveness in business life and recently also in public sector organisations. Today it even has its own international journal dedicated to both practical and scientific debate. In spite of this interest, benchmarking’s benefits as a research method have not yet been utilised. Therefore Metodix approaches benchmarking in three articles. This first article introduces its roots and content. The second article clarifies how it could be used as a case study, and the third delineates how it could be used in the field of action research.

Short History of Benchmarking

Throughout history, people have developed methods and tools for setting, maintaining and improving standards of performance. Many examples from industrial history may be considered early forms of benchmarking. For example, in the early 1800s the British textile industry held a leading position in the world. A remarkable American industrialist, Francis Lowell, travelled to England, where he studied the manufacturing techniques and industrial design of the British mills. He noticed that even though the factory equipment appeared sophisticated, there was room for improvement in the way British plant layouts utilized labour. Using technology very similar to what he had seen in England, Lowell built a new plant in the U.S. that functioned in a less labour-intensive fashion (Bogan & English 1994, 2-4).

There are several different opinions about where the term “benchmarking” originates. Its metaphorical and linguistic roots lie in the land surveyor’s term, where a benchmark is a distinctive mark made on a rock, wall, building or similar. Andersen & Pettersen (1996) give three explanations for the birth of the term. The first claims that it originates from geographical surveying, where a benchmark is a topological reference point in the terrain used to determine one’s current position; the positions of other points are located with reference to this benchmark. According to the second, the term originates from the sale of fabrics, where stores used to have (and often still have) a ruler sunk into the counter to measure fabric, so that measures could be taken against a chosen benchmark. The third explanation claims that benchmarks were used in fishing contests, where the size of a fish was measured on a bench by making a mark in the bench with a knife.

It should be noted that there is both a conceptual and a terminological distinction between benchmarking and a benchmark. According to Bogan & English (1994, 4), benchmarking is an on-going search for the best practices that produce superior performance when adapted and implemented in one’s organization. Emphasis should be placed on the continuous elements of benchmarking.

In most cases benchmarking activities relate to quality improvement and have, in fact, developed together with quality management.

Today benchmarking has established its position as a tool to improve an organization’s performance and competitiveness in business life. Recently it has also extended its scope from large firms to small businesses and to the public and semi-public sectors (e.g. Ball 2000, Davis 1998, Jones 1999, Kulmala 1999, McAdam & Kelly 2002). The current state of benchmarking offers excellent challenges for scientific research, both as a phenomenon and as a research method. This article focuses on its forms and conceptual bases, serving as a starting point for both of these purposes.

Defining the concept of benchmarking

Conceptual elements of benchmarking

The definitions and classifications of benchmarking vary between scholars according to the time and the criteria they focus on. For example, Kulmala (1999) found in his dissertation that benchmarking basically refers to the process of evaluating and applying best practices that provide possibilities to improve quality. According to Bhutta and Huq (1999, 255), “benchmarking is first and foremost a tool for improvement, achieved through comparison with other organizations recognised as the best within the area”. Ahmed and Rafiq (1998), on the other hand, argue that the essence of benchmarking is learning how to improve activities, processes and management.

These elements of evaluation and improvement of practices by learning from others are embedded in different forms of benchmarking regardless of the definer (e.g. Ball 2000, Büyüközkan & Maire 1998, Carpinetti & Melo 2002, Comm & Mathaisel 2000, Elmuti & Kathawala 1997, Fernandez & al. 2001, Longbottom 2000, Prado 2001, Watson 1993, Yasin 2002, Zairi & Whymark 2000a and b). The target, bases and nature of the definitions, however, have changed in the course of time.

Evolutionary approach to benchmarking

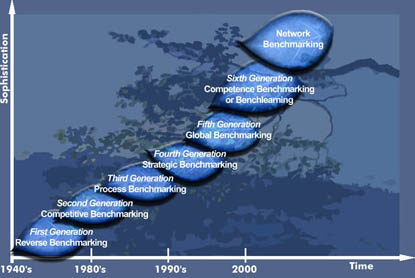

Watson, in fact, suggests that benchmarking is an evolving concept that has developed since the 1940s towards more sophisticated forms. He proposes that it has undergone five generations (Watson 1993).

The first generation, titled “reverse engineering”, was product oriented, comparing the product characteristics, functionality and performance of competitive offerings. Most authors, however, trace this generation to developments at Rank Xerox at the turn of the 1980s, when the company started to use benchmarks in order to compare itself to competitors as well as to reference points within its own organization.

The second generation, “competitive benchmarking”, involved comparisons of processes with those of competitors.

The third generation, process benchmarking, was based on the idea that lessons can be learned from companies outside one’s own industry. Hence information sharing became less restricted and non-competitive. At the same time, however, it required a more in-depth understanding, in particular of similarities between processes that appear different on the surface.

The fourth generation, strategic benchmarking, introduced in the 1990s, involves a systematic process for evaluating options, implementing strategies and improving performance by understanding and adopting successful strategies from external partners. Typical of this perspective are continuous, long-term development and the aim of making fundamental shifts in processes. In the fifth generation this was complemented by a global orientation (Ahmed & Rafiq 1998).

Originally developed by Watson, further modified by Ahmed & Rafiq 1998, 288 and finally supplemented by Kyrö 2002.

Figure 1: Different generations of benchmarking (Kyrö 2002)

Looking at the most recent studies, this evolutionary path has received a newcomer: competence or learning benchmarking. The basic philosophy behind competence benchmarking is the idea that the foundation of organizational change processes lies in changes in the actions and behaviour of individuals and teams. Karlöf & Östblom (1995) use the term benchlearning, which also refers to a cultural change in efforts to become a learning organization. Organizations can improve their effectiveness by developing competences and skills and by learning how to change attitudes and practices (Kulmala 1999).

Even though learning, as a part or as the core of benchmarking, has been present throughout its evolutionary development, towards the end of the 20th century its nature, scope and shape had changed. Previously the focus was on model learning with, as Bhutta and Huq (1999) suggest, a problem-based orientation. The contemporary tendency is more process-oriented. It also aims to find solutions to “how questions” rather than focusing on “what questions”, i.e. how things happened and how to apply them within organizations. The most recent studies also expand learning from the individual and group level towards collective learning that aims to influence organizational culture (e.g. Zairi & Whymark 2000 a and b). Even though the term competence or learning benchmarking is still rarely mentioned, this change is evident in the work of many authors (e.g. Ball 2000, Bhutta & Huq 1999, Comm & Mathaisel 2000, Elmuti & Kathawala 1997, Jones 1999, Fernandez & al. 2001, Zairi & Whymark 2000 a and b).

The idea of learning from others has been accompanied by learning with others. This takes place within organizations as well as between them. A recent example is Prado’s (2001) article on Spanish companies, which describes business networking for sharing experience in quality. The companies varied in size and came from different industries, but they had a mutual problem. This might be a promising opening for yet another new form of benchmarking, namely network benchmarking.

The advantages of networking can be seen in the light of the criticism that model learning has received. Longbottom (2000) raises the dilemma between copying competitors and gaining competitive advantage through distinctive performance. Davis (1998), on the other hand, proposes that, especially in the public sector, it would be better to invent new practices than to benchmark antiquated ones. Another argument against model learning relates to the changing environment (e.g. Hammer & Champy 1993): imitating existing practices is too slow and incremental, and consequently there is a need for faster and more radical approaches. Senge (1990) describes this difference as one between adaptive and generative learning. Adaptive learning in benchmarking aims to identify the practices that help in adapting to changes. Generative benchmarking directs its attention to innovative solutions and possibilities in order to create the future.

From that perspective, changes in learning and its orientation not only extend the scope of benchmarking into internal learning processes but also affect the choice of benchmarking partners. Generative solutions for future excellence are more flexible in this respect. The focus is less on contemporary competition and more on mutual problems of the future. This motivates and allows experience sharing in networks.

On the other hand, networks have also established themselves as a business structure at the local, regional, national and international levels. This complicates the choice of both participants and partners. Instead of one single unit or organization, benchmarking might involve a network on both sides, as participants and as partners.

Finally, as benchmarking has expanded its context from the private sector into the public and semi-public sectors, we face new challenges. The basic nature of public services is not to compete with each other; rather, they have been established in order to provide the best possible services as effectively and efficiently as possible. If one organization succeeds in providing excellent solutions, these are supposed to be open to others as well. The focus is on cooperation rather than on competition. This also concerns international arenas. Of course, this becomes more complicated when public and private sector practices are mingled and they, for example, offer similar services in genuine competition.

The need for generative, future-oriented solutions, the structural changes in business and other organizations, and the emergence of public sector organizations with their specific problems are all aspects that support the idea that network benchmarking might be a new type of benchmarking in the future. These aspects do not easily find their natural place within contemporary forms of benchmarking; thus there is a need to extend the definition of benchmarking.

Compared to Watson’s model of generations the most recent studies of benchmarking seem to provide two new approaches – competence and network generations. Thus we have seven different options that provide raw material for different classifications.

Classifying benchmarking

Different authors have divided or combined these generations of benchmarking according to different criteria, e.g. the aim, the focus and/or the bases and target of comparison. The basic claims behind these efforts are that, from the benchmarker’s perspective, the different forms are not mutually exclusive but rather complementary, and that both the form and its content are context-bound and should thus be chosen and customised for each purpose (e.g. Bhutta & Huq 1999, Carpinetti & Melo 2002, Elmuti & Kathawala 1997, Longbottom 2000, Fernandez & al. 2001). The term “benchmarker” refers here to the organization or unit that wants to improve its activities by learning from or with others.

For that purpose Bhutta and Huq (1999) introduce an integrated matrix with two dimensions: what is compared and what the comparison is made against. They suggest that the relevance of each combination in the matrix should be graded as high, medium or low. They also provide a general grading for each combination (see Table 1).

Table 1. The matrix of different forms of benchmarking

Source: Bhutta & Huq 1999, 257, originally adapted from Leibfried and Mcnair 1992

Bhutta and Huq (1999, 257) define performance benchmarking as a comparison of performance measures for the purpose of determining how good the company is as compared to others. Process benchmarking concerns methods and processes aiming to improve the processes of the company. Finally strategic benchmarking is needed when the company aims to change its strategic direction and the comparison relates to how the strategy is made.

As comparison partners Bhutta and Huq use the organization itself (internal), competitors, own industry or technology (functional) and finally best practices regardless of industry (generic).

Compared to the evolutionary classification, the matrix seems accurate as far as the focus is on the first four generations. It covers their essential features and advances the classification. When it comes to the most recent developments, however, it encounters some problems. It is hard to find a consistent way to position global, competence and network benchmarking within this frame. Most efforts result in inconsistencies and overlapping concepts. The definitions might also turn out to be inadequate for describing the actual problems and the nature of benchmarkers. Using only two dimensions to classify the whole field thus appears insufficient. Moreover, when the number of dimensions and possible options increases, the scaling of low, medium and high relevance might turn out to be inadequate too, simply because some of the specified options might end up being unnecessary or mutually exclusive. These difficulties cause inconsistencies within and between different forms of benchmarking, indicating that classifying benchmarking as an integrated approach is indeed a complex task. Taking into account the most recent developments, as well as the need to position and evaluate a specific case within this field, thus requires rethinking and some modifications. The next section makes an effort in this direction.

From classification towards profiling

The effort to advance Bhutta and Huq’s categorisation follows two criteria. First, it attempts to cover the different forms of benchmarking as extensively as possible. Second, it aspires to maintain consistency between and within categories as far as possible. The aim is to allow the categorisation to be used for two purposes: first, mapping the possibilities for conceptualising, and second, positioning and evaluating specific cases within the field of benchmarking.

In order to follow both suggested criteria the two-dimensional model is insufficient, since there are actually three dimensions, or rather factors, in each form of benchmarking. These are a benchmarker, referring to who is benchmarking; a target, referring to what to benchmark; and a partner, referring to with whom or against what to benchmark. From now on I will use the term “partner” to refer both to what is benchmarked against or with in general and to the specific organizations or units that benchmarking takes place against or with. Usually a partner refers to the latter. The context reveals which one is in question.

Less attention has been paid to the first factor, the benchmarker. In the early phases benchmarking focused on private sector organizations and there was no need to problematise it. Consequently most of the literature and research still use business-oriented concepts such as a firm, a corporation or an enterprise. However, recent developments challenge us to specify explicitly the structure and the scope of a benchmarker. This need emerges because both benchmarkers’ structures and the content of benchmarking have become more complex. For that purpose we have three basically different options, i.e. an organization, a unit in the organization and a network. In this context the concept of an organization refers to a firm or to a public or semi-public organization. A unit is context-bound and can be defined according to the organization in question. This division solves the problem of defining the network from the benchmarker’s perspective. Since these three options are mutually exclusive rather than complementary, there is no need to scale them. Conceptually, an organization in management literature can refer to any organized structure, thus also covering the structure of a network or even a whole economy or a regional alliance like the EU. In this respect a narrow, specific meaning of an organization is used here.

Global benchmarking brought us another category, i.e. geographical scope. It should be taken into account from both the benchmarker’s and the partner’s perspective in order to maintain conceptual, internal consistency.

It might be useful to define it more specifically than just as a global option, i.e. as local, regional, national, a specific geographical area or alliance, or global. The relevance of this division might have been minor earlier in the history of benchmarking, when benchmarkers were mainly large firms and the tendency to form regional alliances was not the focal issue it currently is. The emergence of small firms in benchmarking might also make a difference in this respect, since for them it is relevant to make more specific distinctions between geographical areas. Geographical scope also profiles and guides the choices in further categories. Thus it is needed in order to follow our second aim: to allow positioning and evaluating specific cases within the field of benchmarking.

Since benchmarkers might have either future or current preferences, or the relevance of their current activities might vary between geographical areas, these categories are not exclusive but rather complementary. Thus the scaling of relevance is needed.

For the second factor, the target, it is also hard or even impossible to find a solution that either follows Watson’s idea of evolution towards more sophisticated forms of benchmarking or consists of exclusive categories. Therefore it is divided into five different targets:

- Performance

- Technology

- Process

- Competence

- Strategic

Relationships between these are embedded in the scaling. For example, if we choose a process that closely relates to the performance produced through it, we grade both of these options, although perhaps with different relevance.

In order to cover the qualities of different forms of benchmarking as extensively as possible, we also have to give a more detailed profile of the partner, i.e. define its structure, nature and geographical scope. For the structure and the geographical scope, it is logical to apply the benchmarker’s divisions. As far as the nature of a partner is concerned, it is possible to employ Bhutta and Huq’s categories with a slight modification. The modification concerns functional benchmarking, which in their view involves both own industry and technology. The present classification, however, regards technology as a target, while industry clearly relates to the partner.

On the other hand, industry is a tricky concept that has recently been complemented with such concepts as a sector or a cluster. This reflects the complex changes that have taken place in the whole supply and competition environment. Therefore this could also be taken into account in the classification by extending the content of this category to cover them as well. Since these categories consistently proceed from a smaller to a more extensive context, there is no need for scaling; a benchmarker can simply choose one of them.

Table 2. The categories of benchmarking

*) Scaling of relevance: 1 = not relevant, 2 = low, 3 = medium, 4 = high

Thus we have three factors and six categories containing different options that cover the most recent developments of benchmarking and also seem to be consistent internally and in relation to each other. In order to cover the different forms of benchmarking, it was not possible to achieve absolute internal consistency within the options in three of the categories. Scaling, however, solved this problem (see Table 2). This frame differs from the matrix introduced by Bhutta and Huq and helps us to plan, position and evaluate specific benchmarking cases.
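The three factors and six categories described above can be sketched as a small data structure. This is only an illustrative sketch built from the options named in the text: all class, field and option names (`BenchmarkingProfile`, `Relevance`, `relevant_targets` and so on) are hypothetical, and the assumption that an ungraded option defaults to "not relevant" is ours, not part of the framework.

```python
from dataclasses import dataclass, field
from enum import Enum, IntEnum


class Relevance(IntEnum):
    """Scaling of relevance as given in the note to Table 2."""
    NOT_RELEVANT = 1
    LOW = 2
    MEDIUM = 3
    HIGH = 4


class Structure(Enum):
    """Exclusive options: exactly one is chosen, so no scaling is needed."""
    ORGANIZATION = "organization"
    UNIT = "unit"
    NETWORK = "network"


class PartnerNature(Enum):
    """Options proceed from a smaller to a more extensive context."""
    INTERNAL = "internal"
    COMPETITOR = "competitor"
    INDUSTRY = "industry, cluster or sector"
    GENERIC = "generic"


# Complementary options: each one is graded with a Relevance value.
GEO_SCOPES = ("local", "regional", "national", "area_or_alliance", "global")
TARGETS = ("performance", "technology", "process", "competence", "strategy")


def _scaled(options, grades):
    """Grade every option; ungraded ones default to NOT_RELEVANT (assumption)."""
    unknown = set(grades) - set(options)
    if unknown:
        raise ValueError(f"unknown options: {sorted(unknown)}")
    return {opt: Relevance(grades.get(opt, Relevance.NOT_RELEVANT)) for opt in options}


@dataclass
class BenchmarkingProfile:
    # Factor 1: the benchmarker (structure and geographical scope)
    benchmarker_structure: Structure
    # Factor 3: the partner (structure, nature and geographical scope)
    partner_structure: Structure
    partner_nature: PartnerNature
    benchmarker_scope: dict = field(default_factory=dict)
    # Factor 2: the target
    targets: dict = field(default_factory=dict)
    partner_scope: dict = field(default_factory=dict)

    def __post_init__(self):
        self.benchmarker_scope = _scaled(GEO_SCOPES, self.benchmarker_scope)
        self.targets = _scaled(TARGETS, self.targets)
        self.partner_scope = _scaled(GEO_SCOPES, self.partner_scope)

    def relevant_targets(self, minimum=Relevance.MEDIUM):
        """Return the targets graded at or above the given relevance."""
        return [t for t in TARGETS if self.targets[t] >= minimum]


# Hypothetical case: a unit benchmarking its processes against best practice.
profile = BenchmarkingProfile(
    benchmarker_structure=Structure.UNIT,
    partner_structure=Structure.ORGANIZATION,
    partner_nature=PartnerNature.GENERIC,
    benchmarker_scope={"national": 4},
    targets={"process": 4, "performance": 3},
    partner_scope={"global": 3, "national": 2},
)
print(profile.relevant_targets())
```

The sketch keeps the distinction made in the text: categories with exclusive options are single choices, while categories with complementary options carry a relevance grade for every option, so that a process target and the related performance target can both be graded in the same profile.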

It should be pointed out, however, that the factors and the categories presented in Table 2 are not chronological but rather interactive, as e.g. Bhutta and Huq (1999) suggest. This should be taken into account when choosing and defining each benchmarking case and when evaluating and reflecting on its outcomes.

Figure 3: The interaction of benchmarking factors

It was argued that this new, more specific classification, or rather tool for profiling benchmarking, facilitates positioning and evaluating specific benchmarking cases. Consequently it could be assumed that it might also help in conducting the actual process of benchmarking.

Attaining the purpose of mapping the possibilities for conceptualising requires deriving a definition from these categories. Starting from the general aspects of benchmarking and complementing them by following Table 2, we arrive at this definition:

“Benchmarking refers to evaluating and improving an organization’s, its units’ or a network’s performance, technology, process, competence and/or strategy with chosen geographical scope by learning from and/or with its own unit, another organization or a network that is identified as having best practices in its respective field as a competitor, as an operator in the same industry, cluster or sector or in the larger context with chosen geographical scope.”

Even though this definition is a bit clumsy compared to some of the more general definitions, it depicts the current outlook of this field and most certainly will evolve in the future as we can learn from the history of benchmarking.

From conceptualisation to scientific research

Benchmarking has been used mainly as a practical tool to improve an organisation’s performance. Therefore, within the field of scientific research, it has been a content (i.e. a phenomenon) rather than a method. Besides its practical purposes, the conceptual frame constructed here can also be used as a starting point for both kinds of research: conducting scientific research on the content of benchmarking and developing methods of benchmarking. For the latter aim there are prospects in two directions: first as a specific kind of case method and second as an action research approach. The research process differs between these two, even though both benefit from studies concerning the process of benchmarking. Therefore we suggest that the researcher first identify the research approach s/he wishes to apply and only then start to ponder how to proceed with her/his study. To make this choice, the reader can first use the general descriptions in Metodix provided by Prof. Lukka for a constructive research approach, Prof. Aaltio-Marjosola for case study and Prof. Suojanen for action research, and then the specific articles for both of these.

Kyrö, Paula & Kulmala, Juhani 2004. The Roots and the Content of Benchmarking. www.metodix.com. Menetelmäartikkelit

References

Ahmed P.K. & Rafiq M. 1998: Integrated benchmarking: a holistic examination of select techniques for benchmarking analysis. Benchmarking for quality management & technology volume 5 number 3 1998 pp. 225-242

Andersen, P. & Pettersen P.-G. 1996: The Benchmarking Handbook: Step-by-Step Instructions. London: Chapman & Hall.

Babicky, J. 1996: TQM and the role of CPAS in industry. The CPA Journal, 66(33), 69-70.

Ball A. 2000: Benchmarking in local government under a central government agenda. Benchmarking: An International Journal. Vol. 7 No. 1 2000 pp. 20-34.

Bhutta K.S. & Huq F. 1999: Benchmarking – best practices: an integrated approach. Benchmarking: An International Journal. Vol. 6 No. 3 1999 pp. 254-268.

Bogan & English 1994: Benchmarking for Best Practices. Winning through innovative adaptation. McGraw-Hill, Inc.

Büyüközkan G. & Maire J-L. 1998: Benchmarking process formalization and a case study. Benchmarking for Quality, management & technology Vol. 5 No. 2 1998 pp. 101-125.

Camp, R.C. 1989: Benchmarking. The Search for Industry Best Practices That Lead to Superior Performance. Milwaukee, Wisconsin: ASQC Quality Press.

Carpinetti L.C.R. & de Melo A.M. 2002: What to benchmark? A systematic approach and cases. Benchmarking: An International Journal. Vol. 9 No. 3 2002 pp. 244-255. MCB UP Ltd.

Comm C.L. & Mathaisel D.F.X. 2000: A paradigm for benchmarking lean initiatives for quality improvement. Benchmarking: An International Journal. Vol. 7 No. 2 2000 pp. 118-127. MCB University.

Davis P. 1998: The burgeoning of benchmarking in British local government. Benchmarking for quality management & technology volume 5 number 4 1998 pp. 260-270.

Elmuti D. & Kathawala Y. 1997: An overview of benchmarking process: a tool for continuous improvement and competitive advantage. Benchmarking for Quality, Management & Technology. Vol. 4 No. 4 1997 pp. 229-243. MCB University Press.

Fernandez P., McCarthy P.F. & Rakotobe-Joel T. 2001: An evolutionary approach to benchmarking. Benchmarking: An International Journal. Vol. 8 No. 4 2001 pp. 281-305. MCB University.

Hammer M. & Champy J. 1993: Re-engineering the corporation: A manifesto for business revolution. Brealey. London.

Holmström & Eloranta 1995: From uncertainty to controllability, learning and innovation. In Rolstadås A. (1995) ed. Benchmarking theory and practice. Chapman & Hall.

Jones R. 1999: The role of benchmarking within the cultural reform journey of an award-winning Australian local authority. Benchmarking: An International Journal. Vol. 6 No. 4 1999 pp. 338-349. MCB University.

Karlöf, B. & Östblom, S. 1995: Benchmarking. Tuottavuudella ja laadulla mestariksi. Gummerus.

Kulmala J. 1999: Benchmarkingin ammatillisen aikuiskoulutuskeskuksen toiminnan kehittämisen välineenä. Acta Universitatis Tamperensis 663. Tampere 1999.

Kyrö P. 2002: Benchmarking Nordic statistics on woman entrepreneurship. Jönköping International Business School. Publication under the printing process.

Longbottom D. 2000: Benchmarking in the UK: an empirical study of practitioners and academics. Benchmarking: An International Journal. Vol. 7 No. 2 2000 pp. 98-117. MCB University.

McAdam R. & Kelly M. 2002: A business excellence approach to generic benchmarking in SMEs. Benchmarking: An International Journal. Vol. 9 No. 1 2002 pp. 7-27. MCB University.

Prado J.C.P. 2001: Benchmarking for the development of quality assurance systems. Benchmarking: An International Journal. Vol. 8 No. 1 2001 pp. 62-69. MCB University.

Sauer, S. & Petrie, R. (1996). Benchmarking best practices can boost WC. National Underwriter. 100 (14), 10-28.

Senge P. 1990: The Fifth discipline. The Art and Practice of the Learning Organisation. London: Century Publishers.

Spendolini, M.J., 1992, The Benchmarking Book, AMACOM, New York, NY.

Watson, G.H., 1993: Strategic Benchmarking: How to Rate your Company’s Performance against the World’s Best, John Wiley and Sons Inc, New York, NY.

Yasin M.M. 2002: The theory and practice of benchmarking: then and now. Benchmarking: An International Journal. Vol. 9 No. 3 2002 pp. 217-243. MCB University.

Zairi M. 1992: Competitive benchmarking. An Executive Guide. TQM. Practitioner Series. Technical Communications Publishing Ltd.

Zairi M. & Whymark J. 2000 a: The transfer of best practices: how to build a culture of benchmarking and continuous learning- part 1. Benchmarking: An International Journal. Vol. 7 No. 1 2000 pp. 62-78. MCB University.

Zairi M. & Whymark J. 2000 b: The transfer of best practices: how to build a culture of benchmarking and continuous learning- part 2. Benchmarking: An International Journal. Vol. 7 No. 2 2000 pp. 146-167. MCB University.
